Streamlining Recommendation Model Training on AMD Instinct™ GPUs

Recommendation model training and inference workloads account for a significant portion of computational requirements across industries including e-commerce, social media, and content streaming platforms. Unlike LLMs, recommendation models give rise to complex and often imbalanced communication across GPUs, along with a higher load on the CPU-GPU interconnect. The ROCm training docker [1] now includes essential libraries for recommendation model training. This blog demonstrates the functionality and ease of training recommendation models using ROCm, along with suggestions for improved configuration of these workloads. We also highlight the inherent benefits of the large HBM size on AMD Instinct™ GPUs for recommendation workloads.

Deep Learning Recommendation Model (DLRM)

DLRMv2 [2] is a representative model for recommendation systems that handles dense (numerical) and sparse (categorical) features along separate paths, then combines them for click-through rate prediction. The architecture includes a bottom MLP for dense features, embedding tables that transform sparse categorical features into dense representations, and a top MLP for joint processing.
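The two-path structure described above can be illustrated with a minimal, framework-free sketch. This is not the DLRMv2 implementation: the layer sizes, the `make_layer` helper, and the random weights are hypothetical, and the two paths are combined by plain concatenation for brevity, whereas [2] describes an explicit feature-interaction step.

```python
import math
import random


def linear(x, weights, bias):
    """Dense layer: one output value per weight row."""
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weights, bias)]


def relu(x):
    return [max(0.0, v) for v in x]


def make_layer(n_in, n_out, rng):
    """Hypothetical helper: small random weights and zero biases."""
    weights = [[rng.uniform(-0.1, 0.1) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out


def dlrm_forward(dense, sparse_ids, bottom, tables, top):
    # Dense path: the bottom MLP maps numerical features to the embedding dimension.
    dense_vec = relu(linear(dense, *bottom))
    # Sparse path: each categorical id selects one row from its embedding table.
    sparse_vecs = [table[i] for table, i in zip(tables, sparse_ids)]
    # Combine both paths (plain concatenation in this sketch).
    combined = dense_vec + [v for vec in sparse_vecs for v in vec]
    # Top MLP: a single logit, turned into a click probability by the sigmoid.
    (logit,) = linear(combined, *top)
    return 1.0 / (1.0 + math.exp(-logit))


rng = random.Random(0)
EMB_DIM, NUM_DENSE, TABLE_ROWS = 4, 3, [10, 20]
bottom = make_layer(NUM_DENSE, EMB_DIM, rng)
tables = [
    [[rng.uniform(-0.1, 0.1) for _ in range(EMB_DIM)] for _ in range(rows)]
    for rows in TABLE_ROWS
]
top = make_layer(EMB_DIM * (1 + len(TABLE_ROWS)), 1, rng)

ctr = dlrm_forward([0.5, 1.2, -0.3], [7, 15], bottom, tables, top)
print(f"predicted click-through probability: {ctr:.4f}")
```

In a real model the embedding tables dominate the parameter count, which is why their placement across GPUs matters far more than the placement of the two MLPs.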

Sparse Embeddings

Embeddings are stored in large embedding tables that may reside entirely on a single GPU, sharded across multiple GPUs, or partially offloaded to CPUs. Processing a single input can require fetching table entries spread across different GPUs, which creates complex and imbalanced communication patterns. TorchRec provides primitives to handle these distributed embeddings, and FBGEMM accelerates the lookup operations. The ROCm training docker comes pre-installed with libraries needed for high-performance computation, communication and sparse embedding operations for recommendation workloads.
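To see how a sharded table can lead to imbalanced traffic, the sketch below counts how many lookups in one batch land on each rank. It assumes the rows are split into contiguous, equal-sized blocks (an illustrative layout, not necessarily the one TorchRec chooses), and the batch of ids is hypothetical.

```python
from collections import Counter


def owner_rank(row_id, num_rows, world_size):
    """Rank that owns row_id when rows are split into contiguous, equal-sized blocks."""
    rows_per_rank = -(-num_rows // world_size)  # ceiling division
    return row_id // rows_per_rank


def lookups_per_rank(row_ids, num_rows, world_size):
    """Number of lookups each rank has to serve for the given batch of row ids."""
    counts = Counter(owner_rank(r, num_rows, world_size) for r in row_ids)
    return [counts.get(rank, 0) for rank in range(world_size)]


# Hypothetical batch: popular items cluster at low ids, so rank 0 is hit most often.
batch_ids = [0, 1, 1, 2, 3, 3, 3, 5, 40, 97]
load = lookups_per_rank(batch_ids, num_rows=100, world_size=4)
print(load)  # [8, 1, 0, 1]
```

With a skewed id distribution like this one, a single rank serves most of the requests while others sit idle, which is the kind of imbalance a sharding plan tries to limit.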

Configuration of Table Sharding

Selecting the appropriate sharding scheme for a given set of tables is key to improving performance. We summarize some of the sharding schemes implemented in TorchRec, as shown in figure 1 below. In the simplest scheme, data parallel (DP), each rank holds a complete copy of the table. Table-wise (TW) sharding places an entire table on a single rank. Row-wise (RW) sharding is preferred for tables with a large number of rows, while column-wise (CW) sharding is preferred when the embedding dimension is large. Each of these sharding schemes offers a trade-off between communication complexity, load imbalance, and memory. The sharding planner in TorchRec uses a performance model based on the system configuration (interconnect bandwidths, memory) to distribute tables across ranks for optimized end-to-end training and inference performance.
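The memory side of this trade-off can be made concrete with some simple arithmetic. The sketch below estimates the weight footprint of one table under each scheme; it assumes fp32 weights, even splits across all ranks for RW and CW, and ignores optimizer state and padding. The function name and the table size are hypothetical, and the real planner accounts for considerably more than this.

```python
def table_footprint(num_rows, emb_dim, world_size, scheme, bytes_per_elem=4):
    """Return (max bytes on any one rank, total bytes across all ranks) for one table."""
    full = num_rows * emb_dim * bytes_per_elem
    if scheme == "DP":  # every rank keeps a complete copy
        return full, full * world_size
    if scheme == "TW":  # a single rank holds the whole table
        return full, full
    if scheme == "RW":  # rows split into equal blocks, one block per rank
        rows_per_rank = -(-num_rows // world_size)  # ceiling division
        return rows_per_rank * emb_dim * bytes_per_elem, full
    if scheme == "CW":  # embedding columns split into equal slices, one per rank
        cols_per_rank = -(-emb_dim // world_size)
        return num_rows * cols_per_rank * bytes_per_elem, full
    raise ValueError(f"unknown sharding scheme: {scheme}")


GIB = 1024**3
footprints = {
    scheme: table_footprint(num_rows=40_000_000, emb_dim=128, world_size=8, scheme=scheme)
    for scheme in ("DP", "TW", "RW", "CW")
}
for scheme, (per_rank, total) in footprints.items():
    print(f"{scheme}: {per_rank / GIB:6.2f} GiB max per rank, {total / GIB:7.2f} GiB in total")
```

DP avoids lookup traffic at the cost of replicating the table on every rank, so the amount of HBM available per GPU bounds how many tables can be placed this way.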

With communication load being a bottleneck in recommendation workloads, the large HBM on AMD GPUs allows a higher fraction of the tables to be placed locally via DP. It is thus essential to describe the system specifications to the planner through the Topology class so that it can select the optimal scheme. These effects become more pronounced when running training or inference on larger multi-node setups. For example, for a single node consisting of 8x MI300 GPUs:

```python
# Configuring the Topology class for a single node with 8x MI300 GPUs.
# The "/ 1000" in the bandwidth values converts per-second rates to per-millisecond rates.
import torch.distributed as dist
from torchrec.distributed.comm import get_local_size
from torchrec.distributed.planner import Topology

hbm_cap = 192 * 1024 * 1024 * 1024  # 192GB MI300X memory size
ddr_cap = 1024 * 1024 * 1024 * 1024  # Up to 1TB host memory per GPU (system specific)
hbm_mem_bw = 5.3 * 1024 * 1024 * 1024 * 1024 / 1000  # 5.3 TB/s MI300X
ddr_mem_bw = 0.8 * 460.8 * 1024 * 1024 * 1024 / 1000  # ~370 GB/s (system specific, e.g., 80% of the theoretical 460.8 GB/s of AMD EPYC™ 9654)
hbm_to_ddr_mem_bw = 128 * 1024 * 1024 * 1024 / 1000  # 128 GB/s (PCIe gen5x16)
intra_host_bw = 0.8 * 7 * 64 * 1024 * 1024 * 1024 / 1000  # ~358 GB/s (80% of 7x64 GB/s using AMD's xGMI)

# `device` is the torch.device for this rank, assumed to be defined earlier in the training script.
topology = Topology(
    local_world_size=get_local_size(),
    world_size=dist.get_world_size(),
    compute_device=device.type,
    hbm_cap=hbm_cap,
    ddr_cap=ddr_cap,
    hbm_mem_bw=hbm_mem_bw,
    ddr_mem_bw=ddr_mem_bw,
    hbm_to_ddr_mem_bw=hbm_to_ddr_mem_bw,
    intra_host_bw=intra_host_bw,
)
```
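As a rough sanity check on these numbers, the sketch below converts the same link rates to bytes per millisecond and estimates how long it would take to move 1 GiB over each link at its peak rate. This is an idealized calculation that ignores latency and contention; the helper name and the 1 GiB payload are hypothetical.

```python
GIB = 1024**3


def to_bytes_per_ms(gib_per_s):
    """Convert a rate in GiB/s to bytes per millisecond."""
    return gib_per_s * GIB / 1000


# Effective link rates, built from the same factors as the configuration values in this post.
links = {
    "HBM": to_bytes_per_ms(5.3 * 1024),
    "host DDR": to_bytes_per_ms(0.8 * 460.8),
    "HBM <-> DDR (PCIe)": to_bytes_per_ms(128),
    "GPU <-> GPU (xGMI)": to_bytes_per_ms(0.8 * 7 * 64),
}

payload = 1 * GIB  # hypothetical 1 GiB of embedding rows
transfer_ms = {name: payload / bw for name, bw in links.items()}
for name, ms in transfer_ms.items():
    print(f"{name:20s} {ms:7.3f} ms per GiB")
```

Reads served from local HBM are more than an order of magnitude faster than transfers over either interconnect, which is why a plan that keeps more table data local tends to reduce the communication bottleneck.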

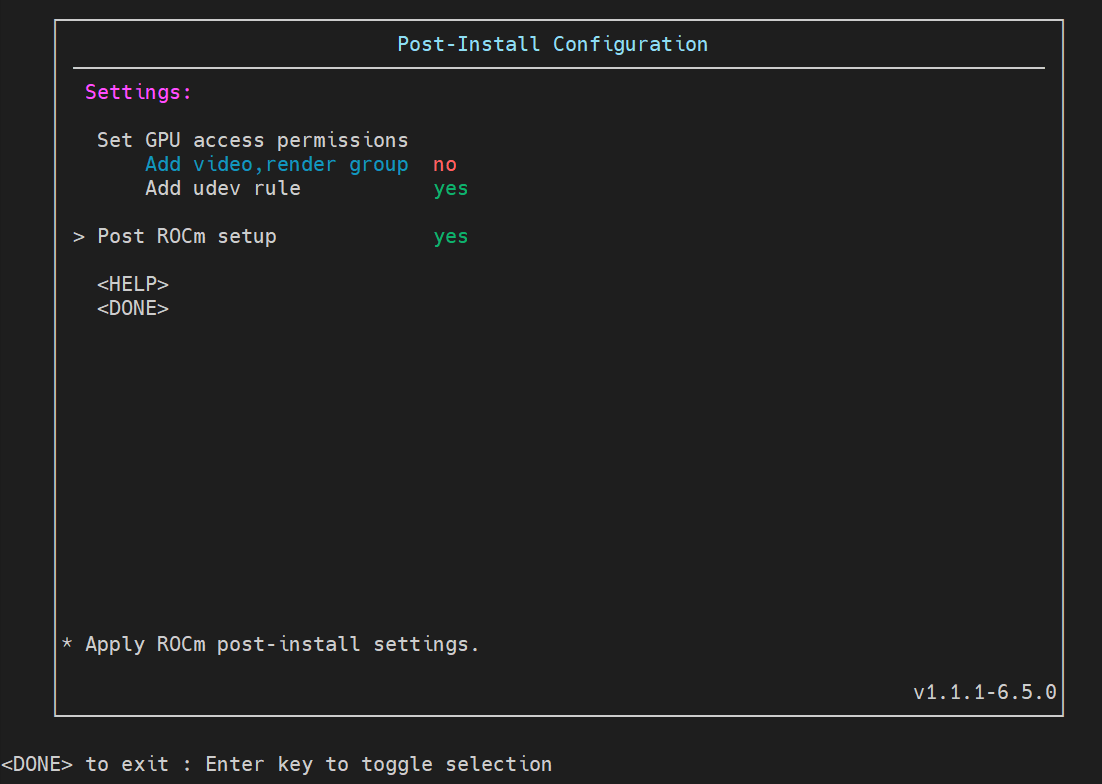

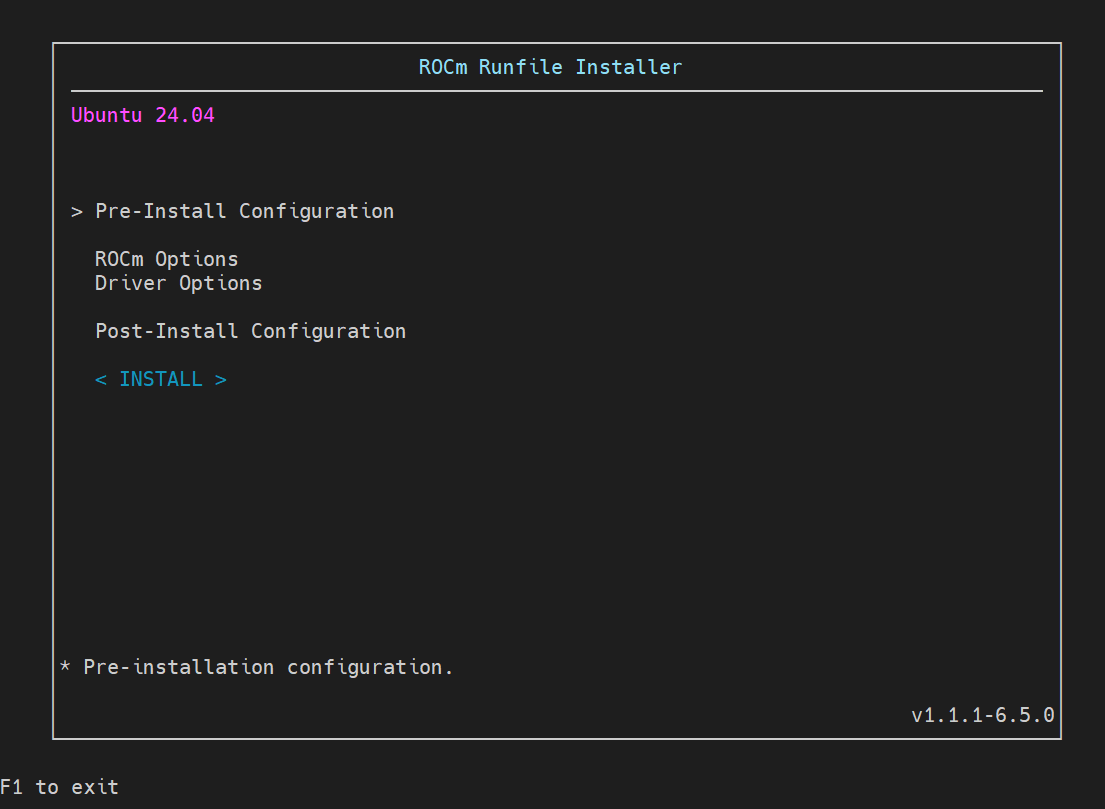

DLRM Training Using ROCm Training Docker

We now provide a quick demonstration of the ease of training recommendation models on ROCm. We use the ROCm training docker which comes pre-installed with the required libraries. We have provided an example for single node DLRM training using the DLRM_v2 model at AMD-AGI/DLRMBenchmark.

Clone the repository:

```shell
git clone https://github.com/AMD-AGI/DLRMBenchmark.git
```

Pull the ROCm training docker container:

```shell
docker pull rocm/primus:v26.1
```

Launch the container. Ensure all required paths, including the codebase, are mounted (similar to /home_dir/).

```shell
docker run -d \
  --ipc=host \
  -v /dev/shm:/dev/shm \
  -v /home_dir/:/home_dir/ \
  -e USER=$user -e UID=$uid -e GID=$gid \
  --device=/dev/kfd \
  --device=/dev/dri \
  --device=/dev/infiniband \
  --ulimit memlock=-1:-1 \
  --shm-size 32G \
  --cap-add=SYS_PTRACE \
  --security-opt seccomp=unconfined \
  --group-add video \
  --network=host \
  --name dlrm_demo \
  -it rocm/primus:v26.1 \
  tail -f /dev/null
```

Start an interactive shell session within the container:

```shell
docker exec -it dlrm_demo bash
```

Launch training via the single node training script in the repository. Note that a training configuration is available at ./training_config.sh.

```shell
./launch_training_single_node.sh
```

During a successful run, the training log shows stable performance:

```text
Epoch 0: 8%|▊ | 75/1000 [02:01<00:42, 21.78it/s]
Mean loss: 0.69320858
Mean loss: 0.69374704
Mean loss: 0.69327664
Epoch 0: 8%|▊ | 78/1000 [02:01<00:41, 22.03it/s]
Mean loss: 0.69344836
Mean loss: 0.69318008
Mean loss: 0.69339764
```
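A log like this is easy to summarize with a few lines of Python. The sketch below parses the sample lines shown above and compares the average loss with ln 2 ≈ 0.6931, which is the binary cross-entropy of a predictor that always outputs 0.5. That the logged value is a binary cross-entropy is an assumption here, not something the log itself states.

```python
import math
import re
import statistics

# Sample lines copied from the training log shown above.
LOG = """\
Epoch 0: 8%|▊ | 75/1000 [02:01<00:42, 21.78it/s]
Mean loss: 0.69320858
Mean loss: 0.69374704
Mean loss: 0.69327664
Epoch 0: 8%|▊ | 78/1000 [02:01<00:41, 22.03it/s]
Mean loss: 0.69344836
Mean loss: 0.69318008
Mean loss: 0.69339764
"""

losses = [float(m) for m in re.findall(r"Mean loss:\s*([0-9.]+)", LOG)]
rates = [float(m) for m in re.findall(r"([0-9.]+)it/s", LOG)]

mean_loss = statistics.mean(losses)
chance_level = -math.log(0.5)  # binary cross-entropy of a constant 0.5 prediction

print(f"average logged loss:      {mean_loss:.5f}")
print(f"BCE of a 0.5 prediction:  {chance_level:.5f}")
print(f"throughput range:         {min(rates):.2f}-{max(rates):.2f} it/s")
```

A loss that stays at roughly ln 2 indicates predictions that are no better than chance, so this output mainly confirms that the pipeline runs at a steady iteration rate rather than that the model is learning.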

The train_config.sh file can be updated to point to the Criteo-1B data if available. The training loss then converges, as shown in figure 2 below:

Figure 2: DLRM Training Convergence

Summary

Recommendation model training on ROCm has been simplified through the training docker, which pairs TorchRec and FBGEMM with high-performance communication and computation kernels. Properly configuring the TorchRec sharding planner allows the system to exploit the large HBM of AMD Instinct™ GPUs to favor local placement and reduce communication bottlenecks. We provided an example of training recommendation models through the DLRM workload. These capabilities extend to larger multi-node deployments, where system-aware sharding can sustain performance at scale. We hope this helps teams accelerate recommendation model development and achieve strong end-to-end performance on AMD GPUs and ROCm!

References

[1] Training a model with PyTorch on ROCm

[2] Maxim Naumov and Dheevatsa Mudigere and Hao-Jun Michael Shi and Jianyu Huang and Narayanan Sundaraman and Jongsoo Park and Xiaodong Wang and Udit Gupta and Carole-Jean Wu and Alisson G. Azzolini and Dmytro Dzhulgakov and Andrey Mallevich and Ilia Cherniavskii and Yinghai Lu and Raghuraman Krishnamoorthi and Ansha Yu and Volodymyr Kondratenko and Stephanie Pereira and Xianjie Chen and Wenlin Chen and Vijay Rao and Bill Jia and Liang Xiong and Misha Smelyanskiy (2019). Deep Learning Recommendation Model for Personalization and Recommendation Systems. https://arxiv.org/abs/1906.00091

[3] Mudigere, Dheevatsa and Hao, Yuchen and Huang, Jianyu and Jia, Zhihao and Tulloch, Andrew and Sridharan, Srinivas and Liu, Xing and Ozdal, Mustafa and Nie, Jade and Park, Jongsoo and Luo, Liang and Yang, Jie (Amy) and Gao, Leon and Ivchenko, Dmytro and Basant, Aarti and Hu, Yuxi and Yang, Jiyan and Ardestani, Ehsan K. and Wang, Xiaodong and Komuravelli, Rakesh and Chu, Ching-Hsiang and Yilmaz, Serhat and Li, Huayu and Qian, Jiyuan and Feng, Zhuobo and Ma, Yinbin and Yang, Junjie and Wen, Ellie and Li, Hong and Yang, Lin and Sun, Chonglin and Zhao, Whitney and Melts, Dimitry and Dhulipala, Krishna and Kishore, KR and Graf, Tyler and Eisenman, Assaf and Matam, Kiran Kumar and Gangidi, Adi and Chen, Guoqiang Jerry and Krishnan, Manoj and Nayak, Avinash and Nair, Krishnakumar and Muthiah, Bharath and Khorashadi, Mahmoud and Bhattacharya, Pallab and Lapukhov, Petr and Naumov, Maxim and Mathews, Ajit and Qiao, Lin and Smelyanskiy, Mikhail and Jia, Bill and Rao, Vijay (2022). Software-hardware co-design for fast and scalable training of deep learning recommendation models. ISCA '22: Proceedings of the 49th Annual International Symposium on Computer Architecture. Pages 993–1011.

Disclaimers

Third-party content is licensed to you directly by the third party that owns the content and is not licensed to you by AMD. ALL LINKED THIRD-PARTY CONTENT IS PROVIDED “AS IS” WITHOUT A WARRANTY OF ANY KIND. USE OF SUCH THIRD-PARTY CONTENT IS DONE AT YOUR SOLE DISCRETION AND UNDER NO CIRCUMSTANCES WILL AMD BE LIABLE TO YOU FOR ANY THIRD-PARTY CONTENT. YOU ASSUME ALL RISK AND ARE SOLELY RESPONSIBLE FOR ANY DAMAGES THAT MAY ARISE FROM YOUR USE OF THIRD-PARTY CONTENT.