Developers Blogs

From Build to Benchmark: ONNX Model Serving with Triton Inference Server on AMD GPUs

Step-by-step guide to building, deploying, and benchmarking ONNX models with Triton Inference Server and MIGraphX on AMD GPUs

ROCm 7.13: Expanding Hardware, Tools, and Reach

Explore what's new in the ROCm 7.13 release, featuring expanded hardware support, GPU virtualization, enhanced developer tooling, and TheRock's modular packaging.

vLLM-ATOM: Unlocking Native AMD Performance in the vLLM Ecosystem

Use ATOM as an out-of-tree vLLM plugin to keep vLLM compatibility while enabling AMD-optimized attention, model execution, and multi-model support including Kimi-K2.5.

Primus Projection: Estimate Memory and Performance Before You Train

Learn how to use the Primus projection tool to estimate memory and performance for large-scale LLM training on AMD Instinct™ accelerator platforms.

Building Robotics Applications with Ryzen AI and ROS 2

This blog post walks through deploying a robotics application on an AI PC integrated with ROS, the Robot Operating System. We showcase the Ryzen AI CVML Library for perception tasks such as depth estimation, and develop a custom ROS 2 node that allows easy integration with the ROS ecosystem and standard components.

Continuing the Momentum: Refining ROCm For The Next Wave Of AI and HPC

ROCm 7.1 builds on 7.0’s AI and HPC advances with faster performance, stronger reliability, and streamlined tools for developers and system builders.

ROCm 7.0: An AI-Ready Powerhouse for Performance, Efficiency, and Productivity

Discover how ROCm 7.0 integrates AI across every layer, combining hardware enablement, frameworks, model support, and a suite of optimized tools

Day 0 Developer Guide: Running the Latest Open Models from OpenAI on AMD AI Hardware

Day 0 support across our AI hardware ecosystem, from our flagship AMD Instinct™ MI355X and MI300X GPUs to AMD Radeon™ AI PRO R9700 GPUs and AMD Ryzen™ AI Processors

Customizing Kernels with hipBLASLt TensileLite GEMM Tuning - Advanced User Guide

Master hipBLASLt TensileLite Tuning. Learn to build custom kernels that deliver 150%-250% faster GEMM performance on AMD Instinct™ MI300X GPUs

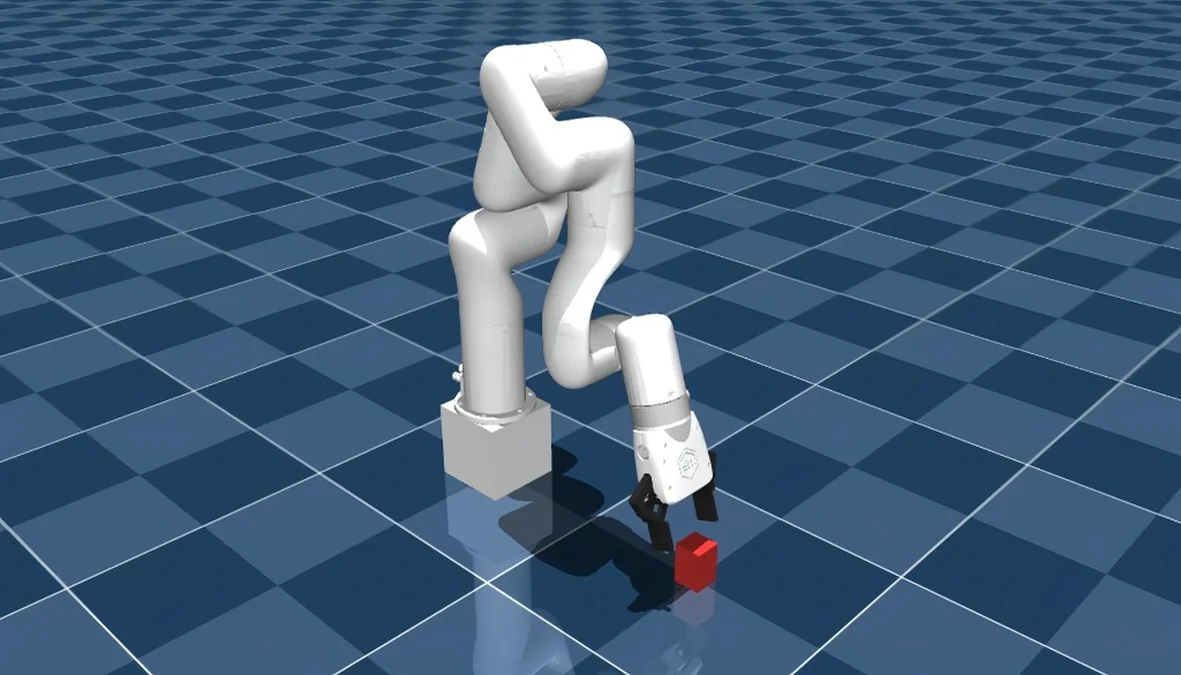

Training a Robotic Arm Using MuJoCo and JAX on AMD Hardware with ROCm™

A complete guide to training an RL-based pick-and-lift robotic arm in MuJoCo with JAX, running on AMD hardware via ROCm.

Edge-to-Cloud Robotics with AMD ROCm: From Data Collection to Real-Time Inference

This blog demonstrates a comprehensive Edge-to-Cloud robotics AI solution powered by the AMD ecosystem and the Hugging Face LeRobot framework.

hipBLASLt Online GEMM Tuning

Learn how to improve model performance with hipBLASLt online tuning integrated into LLM frameworks

Getting Started with FlyDSL Nightly Wheels on ROCm

A practical guide to installing and using FlyDSL nightly wheels on ROCm for fast, Python-native GPU kernel development

Agentic Diagnosis for LLM Training at Scale

Explore how AI agents diagnose LLM training incidents — from RCCL hangs to throughput regressions — in one prompt with MaxText-Slurm.

MaxText-Slurm: Production-Grade LLM Training with Built-In Observability

MaxText-Slurm: A unified launch system for production-grade LLM training with observability on AMD GPU clusters.

Primus-Pipeline: A More Flexible and Scalable Pipeline Parallelism Implementation

Learn how to use our flexible and scalable pipeline parallelism framework with Primus backend and AMD hardware.

Stay informed

- Subscribe to our RSS feed (requires an RSS reader, available as a browser plugin)

- Sign up for the ROCm newsletter

- View our blog statistics