Featured Posts

Running Variational Quantum Eigensolver with Qiskit Aer on AMD Instinct

A step-by-step guide to running GPU-accelerated VQE for quantum chemistry with Qiskit Aer on AMD Instinct using ROCm.

ROCm 7.13: Expanding Hardware, Tools, and Reach

Explore what's new in the ROCm 7.13 release, featuring expanded hardware support, GPU virtualization, enhanced developer tooling, and TheRock's modular packaging.

Reproducing the AMD MLPerf Inference v6.0 Submission Result

Instructions for customers and partners to reproduce and verify our MLPerf Inference v6.0 submission results.

Utilizing AMD Instinct GPU Accelerators for Weather and Precipitation Forecasting with NeuralGCM

A showcase of how to run NeuralGCM, a hybrid general circulation model (GCM), on AMD Instinct hardware, covering an introduction, installation, inference, and plotting.

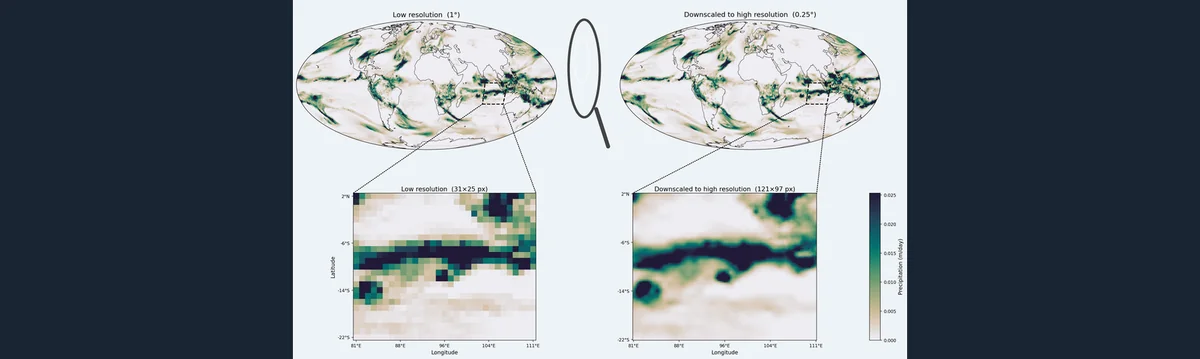

ORBIT-2 based Weather and Climate Downscaling and Downscaled Global Forecasts on AMD Instinct

A showcase of how to run GenCast weather predictions with ORBIT-2 high-resolution downscaling on AMD Instinct hardware.

Adapting AIM LLMs For Specific Use Cases Through Fine-Tuning in AMD AI Workbench

Learn how to adapt and fine-tune an AIM LLM in the AMD AI Workbench GUI for specialized use cases.

Performance Profiling on AMD GPUs - Part 4: Fortran OpenMP Offload Edition

Guides developers through profiling and optimizing Fortran OpenMP GPU offload applications using ROCm tools.

Out-of-the-Box ROLL Support on AMD GPUs: Accelerating Reinforcement Learning at Scale

Learn how to run Alibaba's ROLL RL framework out-of-the-box on AMD Instinct™ GPUs with ROCm.

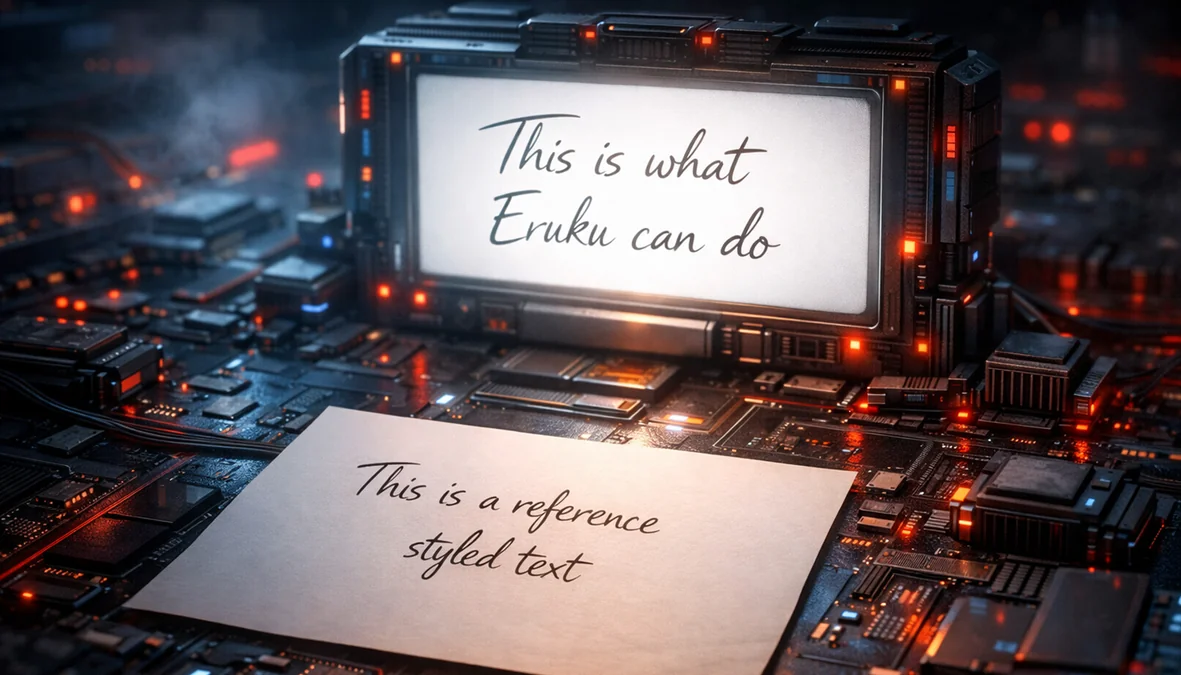

Styled Text Image Generation with Eruku on AMD

A hands-on, reproducible guide to training and running Eruku on the LUMI supercomputer, powered by AMD Instinct MI250X GPUs.

Elevate Your LLM Inference: Autoscaling with Ray, ROCm 7.0.0, and SkyPilot

Learn how to use multi-node and multi-cluster autoscaling in the Ray framework on ROCm 7.0.0 with SkyPilot.

Building Robotics Applications with Ryzen AI and ROS 2

This blog post walks through deploying a robotics application on an AI PC integrated with ROS (Robot Operating System). We use the Ryzen AI CVML Library for perception tasks such as depth estimation and develop a custom ROS 2 node that integrates easily with the ROS ecosystem and its standard components.

Quickly Developing Powerful Flash Attention Using TileLang on AMD Instinct MI300X GPU

Learn how to use TileLang to develop your own kernels and fully utilize AMD GPUs.

Enabling Speculative Speculative Decoding on MI300X

An introduction to the speculative speculative decoding method. We enable this method on AMD Instinct MI300X GPUs and report the results.

AI Inference on AMD Ryzen™ AI Max Processor

Hands-on: run Qwen3.5 9B–122B on Ryzen™ AI Max+ with 128GB UMA and Ollama, with generation benchmarks and a clear UMA setup path on Ubuntu/ROCm.

Diffusion-based Atmospheric Downscaling on AMD Instinct GPUs

Learn the theory behind downscaling models and how to run one such model, CorrDiff, on AMD GPUs.

QuickReduce FP4 Quantization and Benchmarking on MI355

Learn how QuickReduce uses FP4 quantization to accelerate all-reduce communication and evaluate its performance on AMD Instinct MI355 GPUs.

Deep Dive Into 4-Wave Interleave FP8 GEMM

Learn how to build faster FP8 GEMM kernels on AMD CDNA™4 using 4-wave interleaving to hide memory latency and maximize Matrix Core utilization.

From Build to Benchmark: ONNX Model Serving with Triton Inference Server on AMD GPUs

A step-by-step guide to building, deploying, and benchmarking ONNX models with Triton Inference Server and MIGraphX on AMD GPUs.

From Naive to Near-Peak: Building High-Performance GEMM Kernels with Gluon

Learn how a Gluon GEMM tutorial teaches profiling-driven AMD GPU optimization, progressing from an FP16 baseline to BF8 and MXFP4 kernels.

vLLM-ATOM: Unlocking Native AMD Performance in the vLLM Ecosystem

Use ATOM as an out-of-tree vLLM plugin to keep vLLM compatibility while enabling AMD-optimized attention, model execution, and multi-model support including Kimi-K2.5.

Stay informed

- Subscribe to our RSS feed (requires an RSS reader, available as a browser plugin).

- Sign up for the ROCm newsletter

- View our blog statistics

- View the ROCm Developer Hub

- Report an issue or request a feature

- We are eager to learn from our community! If you would like to contribute to the ROCm Blogs, please submit your technical blog for review on our GitHub. To get started, see our GitHub user guide.