AI - Applications & Models - Page 4

Foundations of Molecular Generation with GP-MoLFormer on AMD Instinct MI300X Accelerators

Explore molecular generation with GP-MoLFormer on AMD MI300X GPUs, including sequence-based modeling, inference, and property-guided design.

Nitro-AR: A Compact AR Transformer for High-Quality Image Generation

Nitro-AR is a compact, E-MMDiT-based masked autoregressive (AR) image generator that matches diffusion-model quality with lower latency on AMD GPUs.

Athena-PRM: Enhancing Multimodal Reasoning with Data-Efficient Process Reward Models

Learn how to use a data-efficient Process Reward Model (PRM) to enhance the reasoning ability of large language and multimodal models.

Bridging the Last Mile: Deploying Hummingbird-XT for Efficient Video Generation on AMD Consumer-Grade Platforms

Learn how to use the Hummingbird-XT and Hummingbird-XTX models to generate videos, and explore their video diffusion acceleration solution, including a DiT distillation method and a lightweight VAE model.

High-Resolution Weather Forecasting with StormCast on AMD Instinct GPU Accelerators

A showcase of how to run high-resolution weather prediction models such as StormCast on AMD Instinct hardware.

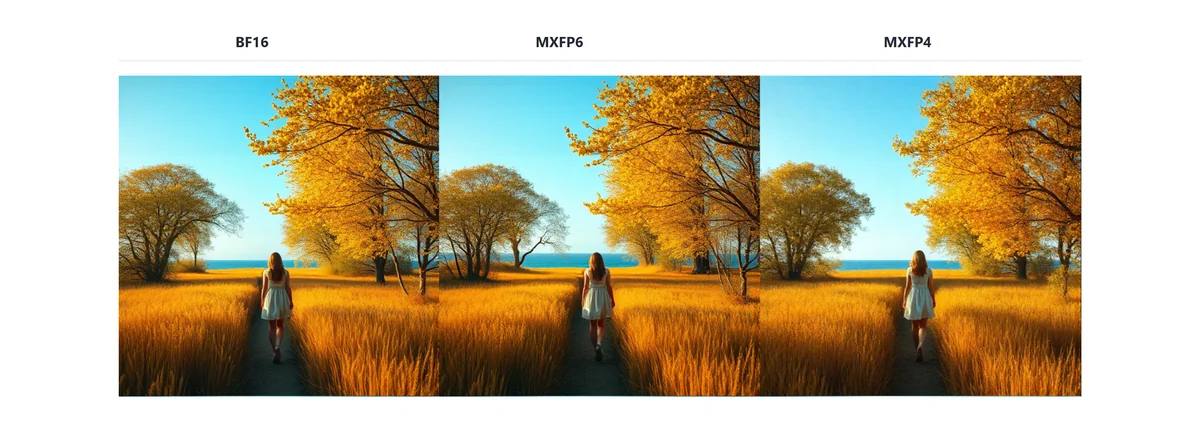

Breaking the Accuracy-Speed Barrier: How MXFP4/6 Quantization Revolutionizes Image and Video Generation

Explore how MXFP4/6, supported by AMD Instinct™ MI350 series GPUs, achieves BF16-comparable image and video generation quality.

ROCm Fork of MaxText: Structure and Strategy

Learn how the ROCm fork of MaxText mirrors upstream while enabling offline testing, minimal datasets, and platform-agnostic, decoupled workflows.

ROCm MaxText Testing — Decoupled (Offline) and Cloud-Integrated Modes

Learn how to run MaxText unit tests on AMD ROCm GPUs in offline and cloud modes for fast validation, clear reports, and reproducible workflows.

SparK: Query-Aware Unstructured Sparsity with Recoverable KV Cache Channel Pruning

In this blog, we discuss SparK, a training-free, plug-and-play method for KV cache compression in large language models (LLMs).

GEAK-Triton v2 Family of AI Agents: Kernel Optimization for AMD Instinct GPUs

Introducing the GEAK family: AI-driven agents that automate GPU kernel optimization for AMD Instinct GPUs using hardware-aware feedback.

A Step-by-Step Walkthrough of Decentralized LLM Training on AMD GPUs

Learn how to train LLMs across decentralized clusters on AMD Instinct MI300 GPUs with DiLoCo and Prime, scaling beyond a single datacenter.

Medical Imaging on MI300X: SwinUNETR Inference Optimization

A practical guide to optimizing SwinUNETR inference on AMD Instinct™ MI300X GPUs for fast 3D segmentation of tumors in medical imaging.