Developers - Applications & Models

Customizing Kernels with hipBLASLt TensileLite GEMM Tuning - Advanced User Guide

Master hipBLASLt TensileLite tuning. Learn to build custom kernels that deliver 150%-250% faster GEMM performance on AMD Instinct™ MI300X GPUs.

Training a Robotic Arm Using MuJoCo and JAX on AMD Hardware with ROCm™

A complete guide to training an RL-based pick-and-lift robotic arm in MuJoCo with JAX, running on AMD hardware via ROCm.

Edge-to-Cloud Robotics with AMD ROCm: From Data Collection to Real-Time Inference

This blog post demonstrates a comprehensive Edge-to-Cloud robotics AI solution powered by the AMD ecosystem and the Hugging Face LeRobot framework.

hipBLASLt Online GEMM Tuning

Learn how to improve model performance with hipBLASLt online tuning integrated into an LLM framework.

GROMACS Performance on AMD Instinct MI355X

Explore GROMACS molecular dynamics performance benchmarks on AMD Instinct MI355X GPUs with HIP acceleration.

Getting Started with ComfyUI on AMD Radeon™ RX 9000 Series GPUs

Learn how to set up and optimize ComfyUI on AMD Radeon RX 9000 GPUs with ROCm 7.1, solve common issues, and start generating.

LuminaSFT: Generating Synthetic Fine-Tuning Data for Small Language Models

Learn how task-specific synthetic data can improve small language model performance and explore results from the LuminaSFT study.

Unlocking Sparse Acceleration on AMD GPUs with hipSPARSELt

This blog post introduces the semi-structured sparsity technology supported on AMD systems and explains how to use the hipSPARSELt library to take advantage of it.

Digital Twins on AMD: Building Robotic Simulations Using Edge AI PCs

Explore how Ryzen AI MAX enables robotic simulation on a single AI PC and take your first step into digital twins.

Resilient Large-Scale Training: Integrating TorchFT with TorchTitan on AMD GPUs

Achieve resilient, checkpoint-less distributed training on AMD GPUs by integrating TorchFT with TorchTitan on Primus-SaFE.

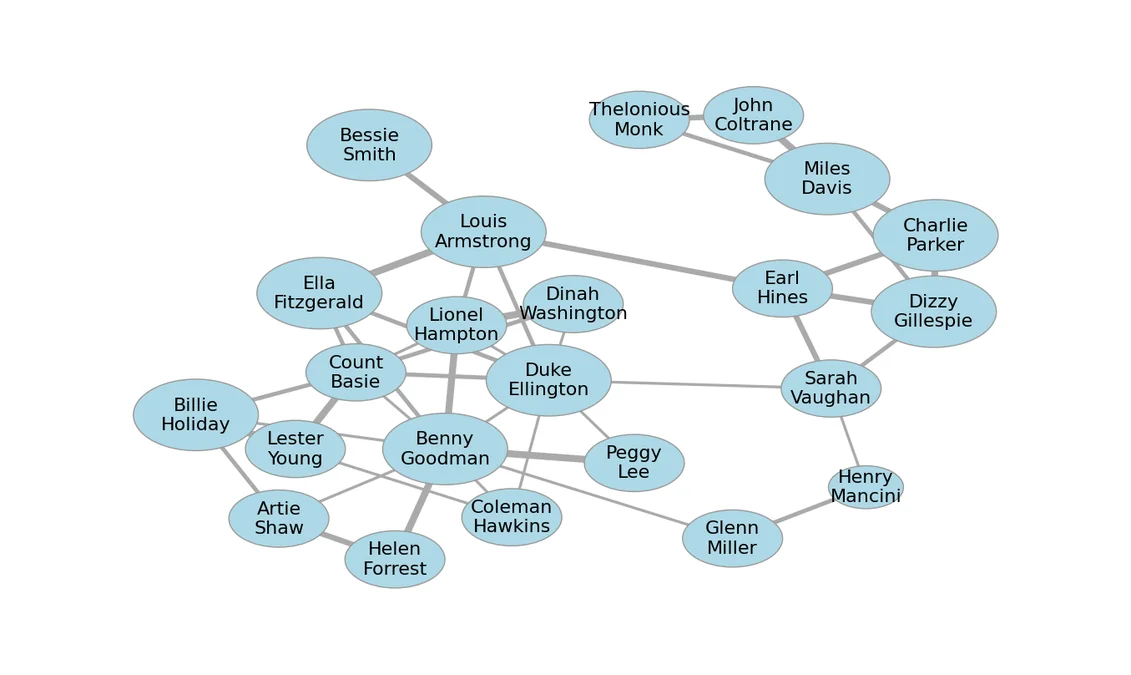

Accelerating Graph Layout with AI and ROCm on AMD GPUs

A case study of using AI coding agents to optimize graph layout on GPUs.

Installing AMD HIP-Enabled GROMACS on HPC Systems: A LUMI Supercomputer Case Study