AI - Applications & Models - Page 2

AMD Instinct™ GPUs MLPerf Inference v6.0 Submission

In this blog, we share the technical details of how we achieved the results in our MLPerf Inference v6.0 submission.

Reproducing the AMD MLPerf Inference v6.0 Submission Result

Step-by-step instructions for customers and partners to reproduce and verify our MLPerf Inference v6.0 submission results.

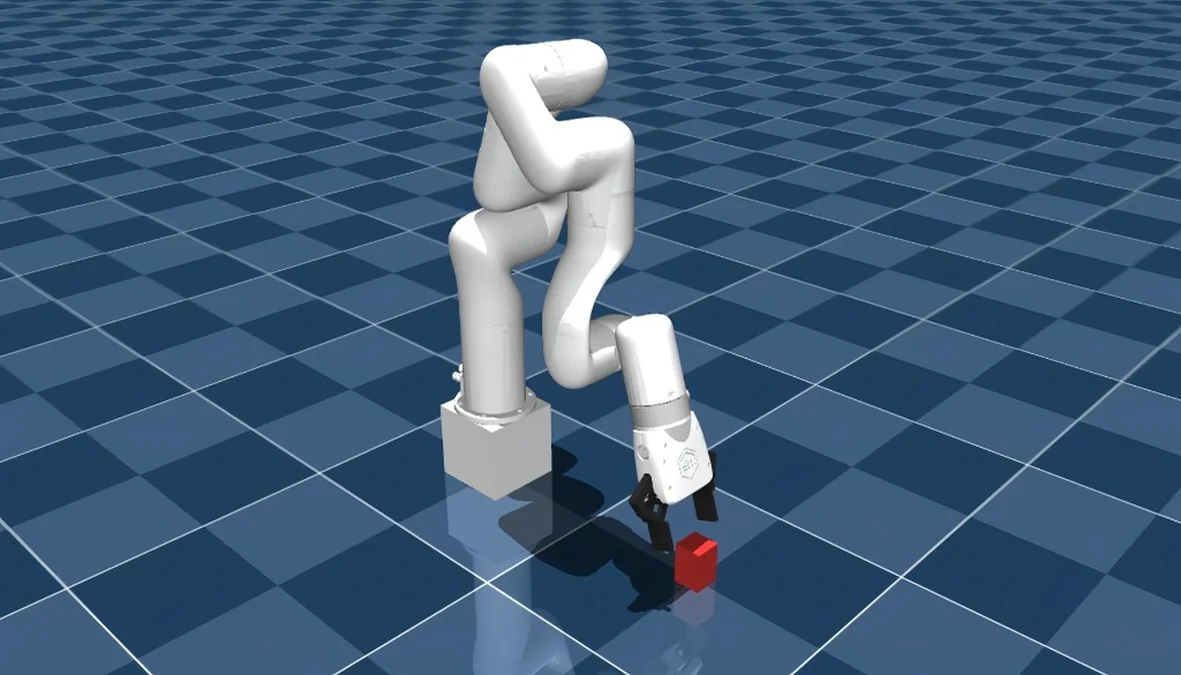

Training a Robotic Arm Using MuJoCo and JAX on AMD Hardware with ROCm™

A complete guide to training an RL-based pick-and-lift robotic arm in MuJoCo with JAX, running on AMD hardware via ROCm.

Programming Tensor Descriptors in Composable Kernel (CK)

Learn how to use TensorDescriptor in Composable Kernel (CK) to manage multi-dimensional data layouts and write efficient GPU kernels on AMD GPUs.

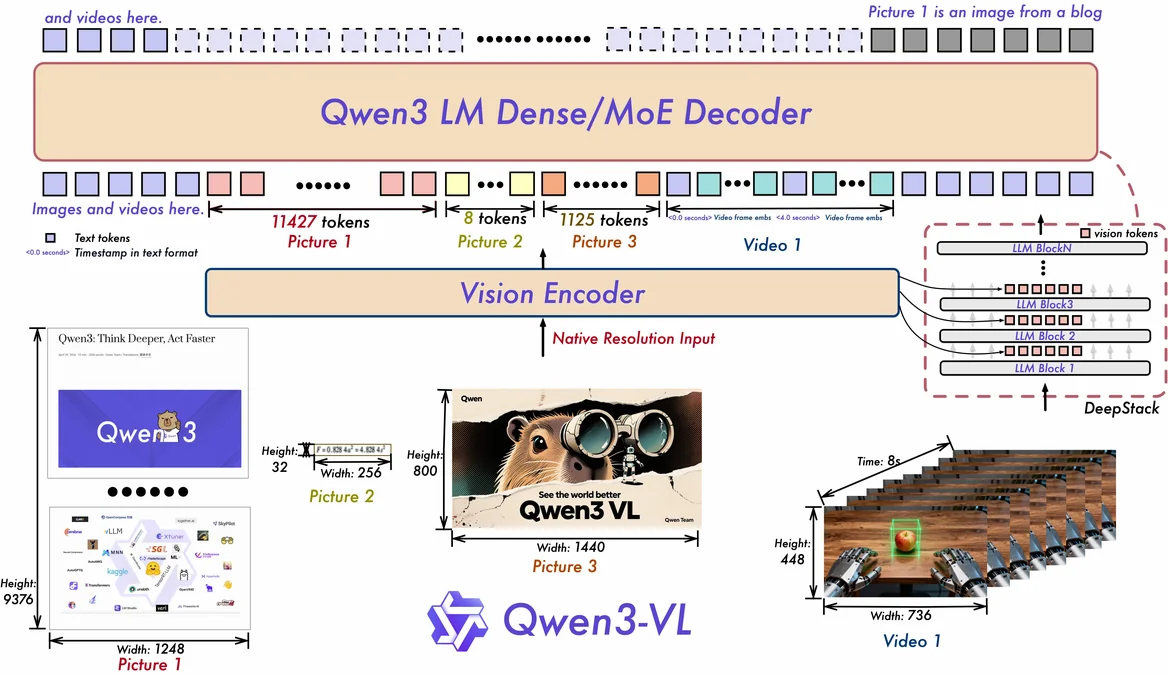

Engineering Qwen-VL for Production: Vision Module Architecture and Optimization Practices

Explore how to optimize Qwen-VL for production on AMD Instinct MI308X GPUs with ROCm, from vision module architecture to kernel fusion and deployment.

Accelerating Kimi-K2.5 on AMD Instinct™ MI300X: Optimizing Fused MoE with FlyDSL

Optimize Kimi-K2.5 on AMD MI300X using FlyDSL for fused MoE kernel acceleration. Achieve lower TTFT and TPOT and higher throughput with our step-by-step optimization guide.

Edge-to-Cloud Robotics with AMD ROCm: From Data Collection to Real-Time Inference

This blog demonstrates a comprehensive Edge-to-Cloud robotics AI solution powered by the AMD ecosystem and the Hugging Face LeRobot framework.

hipBLASLt Online GEMM Tuning

Learn how to improve model performance with hipBLASLt online GEMM tuning integrated into an LLM framework.

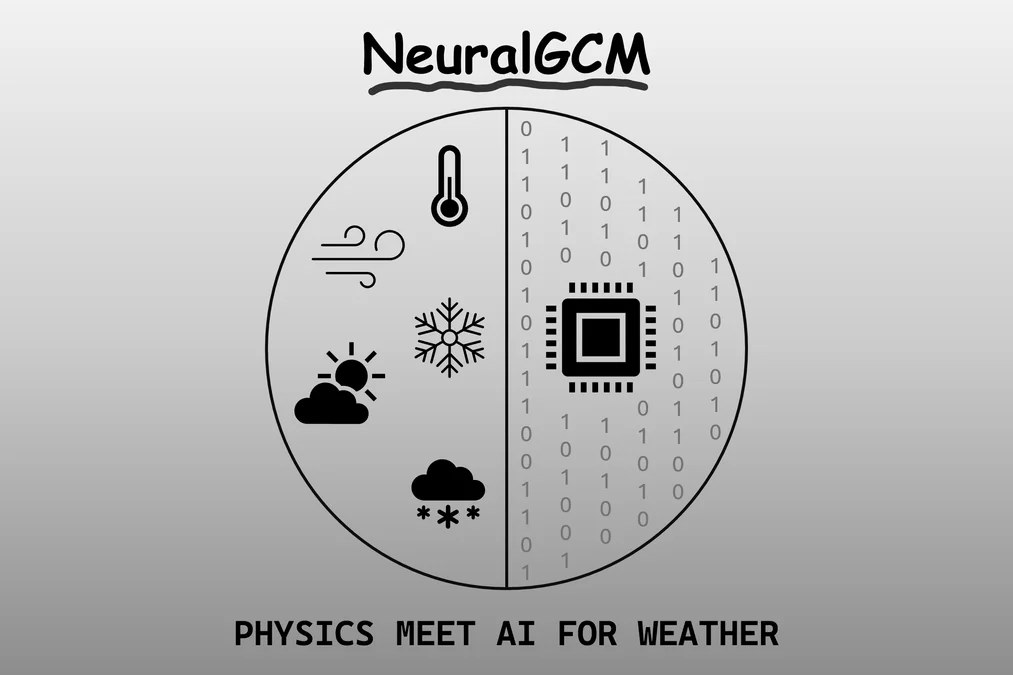

Utilizing AMD Instinct GPU Accelerators for Weather and Precipitation Forecasting with NeuralGCM

A showcase of how to run NeuralGCM, a hybrid general circulation model (GCM), on AMD Instinct hardware, covering an introduction, installation, inference, and plotting.

Getting Started with ComfyUI on AMD Radeon™ RX 9000 Series GPUs

Learn how to set up and optimize ComfyUI on AMD Radeon RX 9000 GPUs with ROCm 7.1, solve common issues, and start generating.

HPC Coding Agent - Part 3: MCP Tool for Profiling

Build an AI agent specialized in optimizing HPC workloads by connecting a Cline agent to expert-level AMD profiling tools via a custom MCP server.

Fine-Tuning AI Surrogate Models for Physics Simulations with Walrus on AMD Instinct GPU Accelerators

A showcase of fine-tuning Walrus, a foundation model for physics simulation, on a new physics dataset using AMD Instinct hardware.