AI - Applications & Models

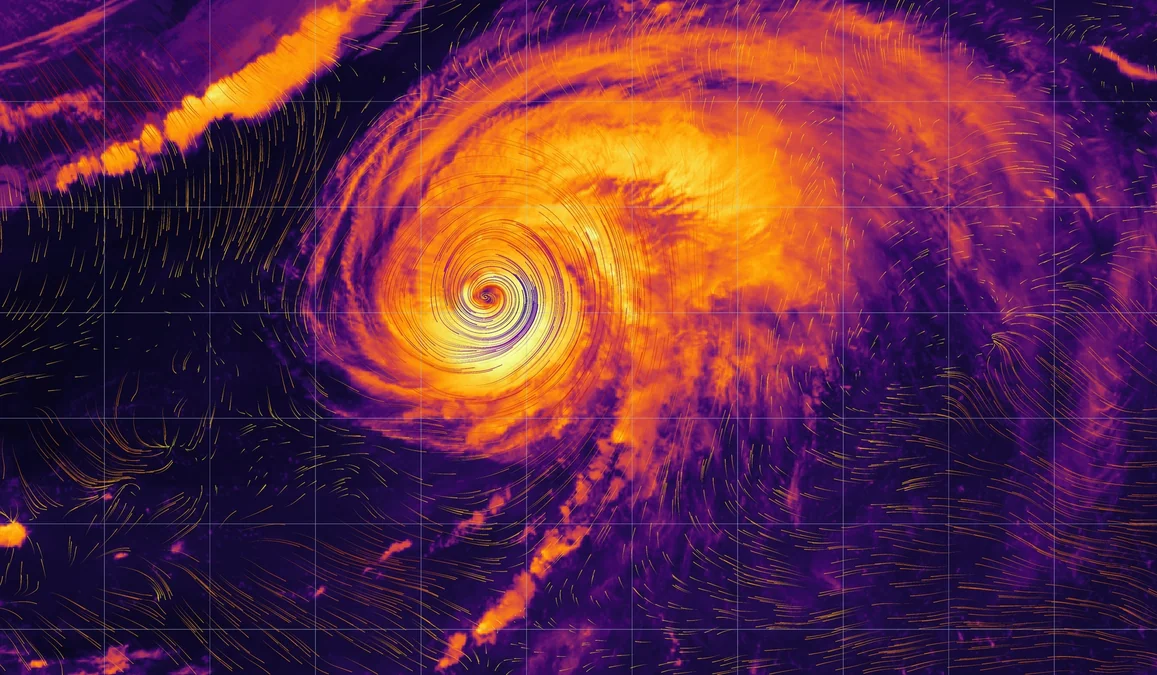

Diffusion-based Atmospheric Downscaling on AMD Instinct GPUs

Learn the theory behind diffusion-based downscaling models and how to run one of them, CorrDiff, on AMD GPUs.

QuickReduce FP4 Quantization and Benchmarking on MI355

Learn how QuickReduce uses FP4 quantization to accelerate all-reduce communication and evaluate its performance on AMD Instinct MI355 GPUs.

Semantic Fencing of Video Streams Using Embedding Splits from Vision Foundation Models

Learn how to semantically split vision datasets using foundation model embeddings on AMD GPUs to reduce data leakage and improve evaluation.

Further Accelerating Kimi-K2.5 on AMD Instinct™ MI325X: W4A8 & W8A8 Quantization with AMD Quark

Quantize Kimi-K2.5 to W4A8 and W8A8 using AMD Quark, then serve it on MI325X with FlyDSL and AITER for further inference acceleration.

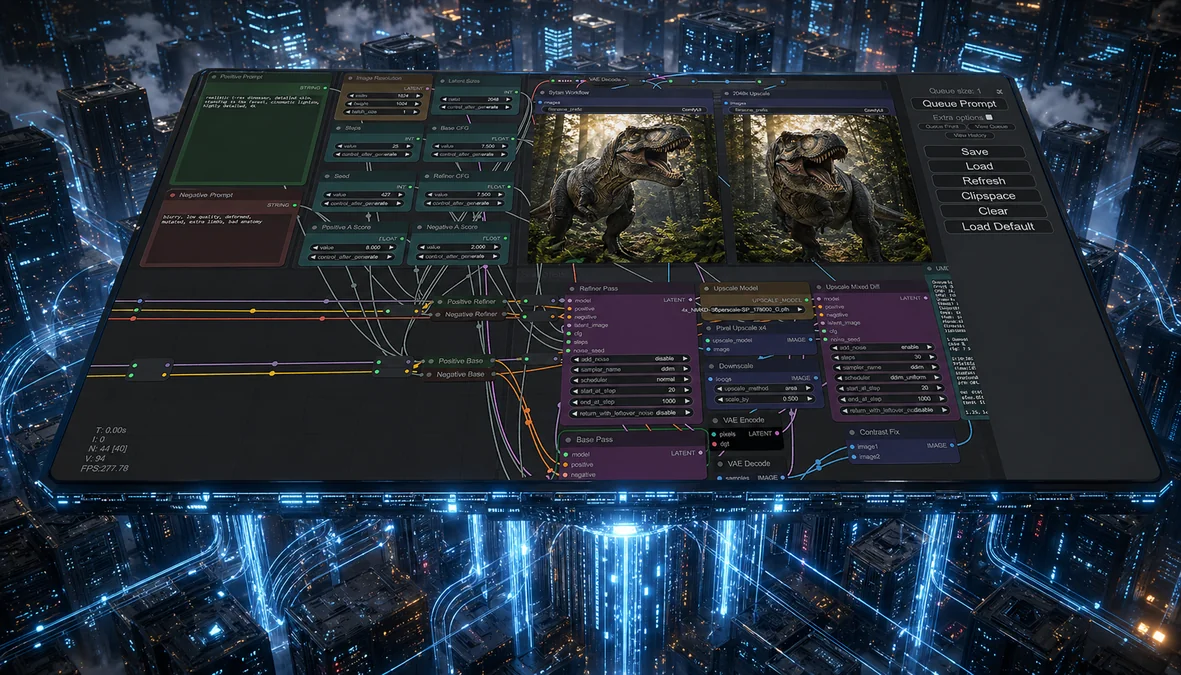

Accelerating ComfyUI Workflows on AMD Instinct™ MI355X GPUs with ROCm

We show that the MI355X delivers better performance than the B200 for ComfyUI after enabling PyTorch attention for gfx950.

AMD-Powered 3D Gaussian Splatting for Autonomous Driving Scenes

Run Street Gaussians on AMD Instinct MI300: migrate to the latest gsplat, install on ROCm, and render dynamic street scenes.

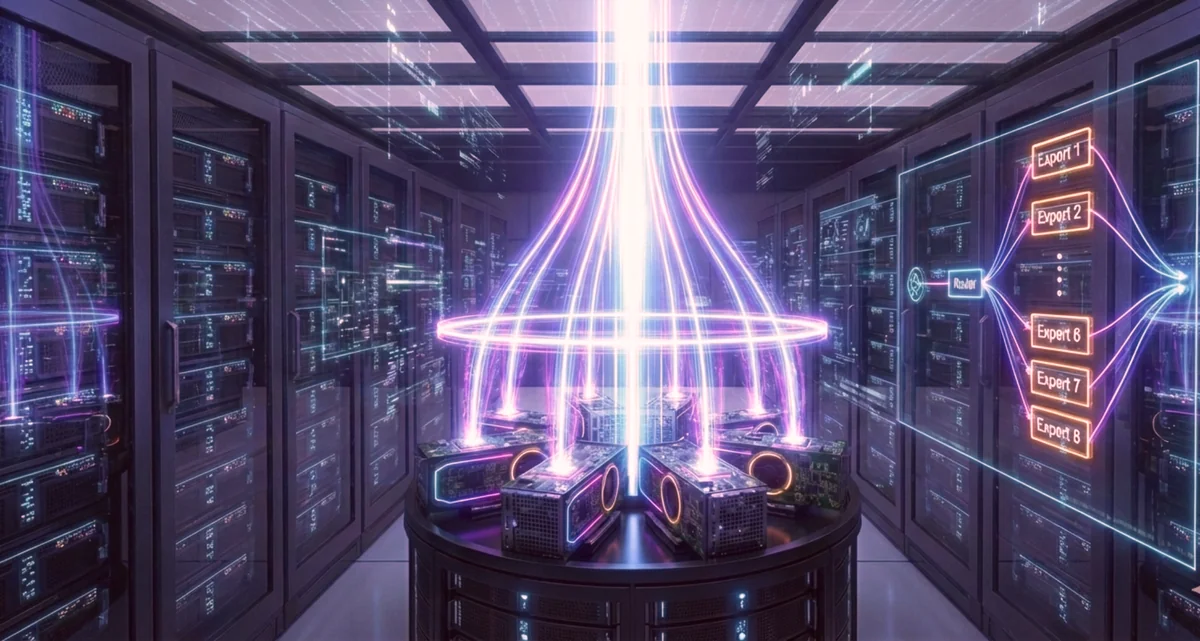

Accelerating Mixture-of-Experts Execution with FarSkip-Collective Models

Explore a new MoE architecture designed for native computation-communication overlap, enabling efficient distributed execution.

FLy: A New Paradigm for Speculative Decoding — Accepting Semantically Correct Drafts Beyond Exact Match

This blog explores a new training-free, loosely speculative decoding method that accepts semantically valid mismatches and speeds up the original speculative decoding (SPD) method.

Serving CTR Recommendation Models with Triton Inference Server using the ONNX Runtime Backend

Learn how to deploy CTR recommendation models on AMD GPUs with Triton Inference Server, which now supports the ONNX Runtime and Python backends, and see performance benchmarks.

FlashInfer on ROCm: High-Throughput Prefill Attention via AITER

FlashInfer, an open-source library for accelerating LLM serving, is now supported on ROCm.

Customizing Kernels with hipBLASLt TensileLite GEMM Tuning - Advanced User Guide

Master hipBLASLt TensileLite tuning and learn to build custom kernels that deliver 150%-250% faster GEMM performance on AMD Instinct™ MI300X GPUs.

Deploy and Customize AMD Solution Blueprints

Learn how to deploy and customize AMD Solution Blueprints, from default deployment to swapping and reusing AMD Inference Microservices across multiple blueprints.