AI Blogs - Page 13

Running ComfyUI on AMD Instinct

This blog explains what ComfyUI is and how it works, and shows how to deploy it on AMD Instinct GPUs.

Accelerating FastVideo on AMD GPUs with TeaCache

Enabling ROCm support for FastVideo inference using TeaCache on AMD Instinct GPUs, accelerating video generation with optimized backends.

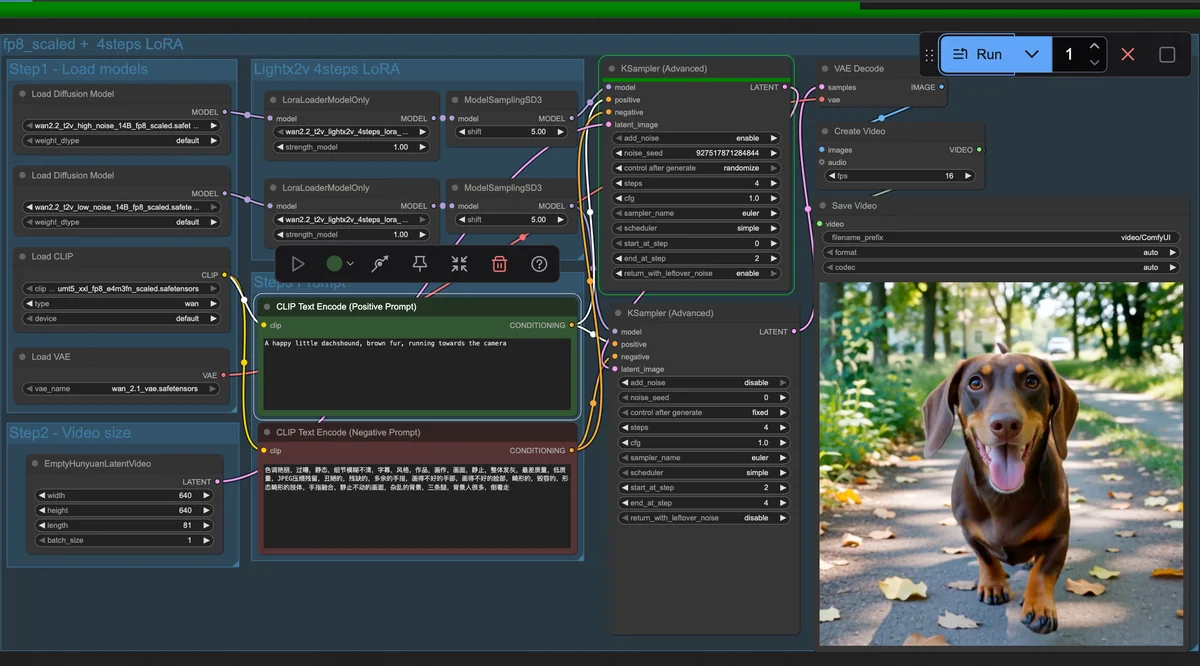

Wan2.2 Fine-Tuning: Tailoring an Advanced Video Generation Model on a Single GPU

Fine-tune Wan2.2 for video generation on a single AMD Instinct MI300X GPU with ROCm and DiffSynth.

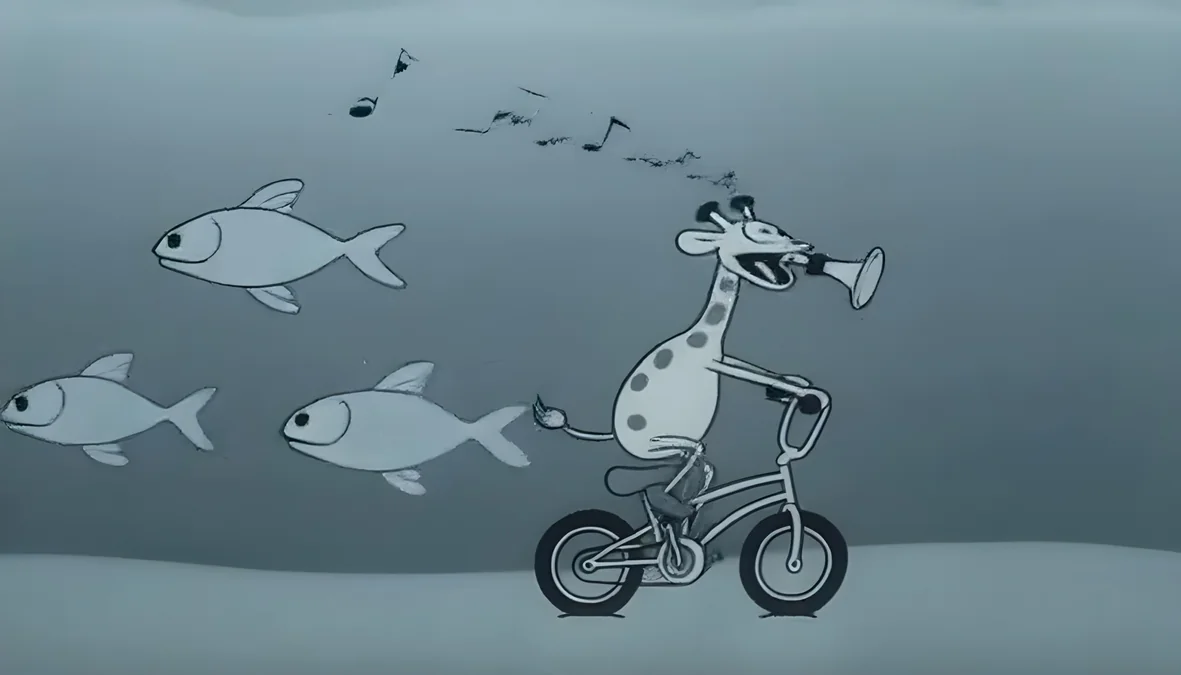

All-in-One Video Editing with VACE on AMD Instinct GPUs

This blog showcases AMD hardware powering cutting-edge text-driven video editing models through an all-in-one solution.

Introducing Instella-Math: Fully Open Language Model with Reasoning Capability

Instella-Math is AMD’s 3B reasoning model, trained on 32 MI300X GPUs with open weights, optimized for logic, math, and chain-of-thought tasks.

Running ComfyUI in Windows with ROCm on WSL

Run ComfyUI on Windows with ROCm and WSL to harness Radeon GPUs for local AI tasks such as Stable Diffusion, with no dual-boot needed.

Day 0 Developer Guide: Running the Latest Open Models from OpenAI on AMD AI Hardware

Day 0 support across our AI hardware ecosystem, from our flagship AMD Instinct™ MI355X and MI300X GPUs to AMD Radeon™ AI PRO R700 GPUs and AMD Ryzen™ AI Processors.

AMD Hummingbird Image to Video: A Lightweight Feedback-Driven Model for Efficient Image-to-Video Generation

We present AMD Hummingbird, offering a two-stage distillation framework for efficient, high-quality text-to-video generation using compact models.

GEAK: Introducing Triton Kernel AI Agent & Evaluation Benchmarks

AMD introduces GEAK, an AI agent for generating optimized Triton GPU kernels, achieving up to 63% accuracy and up to 2.59× speedups on MI300X GPUs.

Accelerating Parallel Programming in Python with Taichi Lang on AMD GPUs

This blog provides a how-to guide on installing and programming with Taichi Lang on AMD Instinct GPUs.

Graph Neural Networks at Scale: DGL with ROCm on AMD Hardware

Accelerate graph deep learning on AMD GPUs with DGL and ROCm, and scale efficiently with open tools and optimized performance.

Avoiding LDS Bank Conflicts on AMD GPUs Using CK-Tile Framework

This blog shows how CK-Tile’s XOR-based swizzle optimizes shared-memory (LDS) access in GEMM kernels on AMD GPUs by eliminating LDS bank conflicts.