AI Blogs#

Enabling Speculative Speculative Decoding on MI300X

This is an introduction of speculative speculative decoding method. We enable this method on the AMD Instinct MI300x GPUs and report the results.

AI Inference on AMD Ryzen™ AI Max Processor

Hands-on: run Qwen3.5 9B–122B on Ryzen™ AI Max+ with 128GB UMA and Ollama, with generation benchmarks and a clear UMA setup path on Ubuntu/ROCm.

From Build to Benchmark: ONNX Model Serving with Triton Inference Server on AMD GPUs

Step-by-step guide to building, deploying, and benchmarking ONNX models with Triton Inference Server and MIGraphX on AMD GPUs

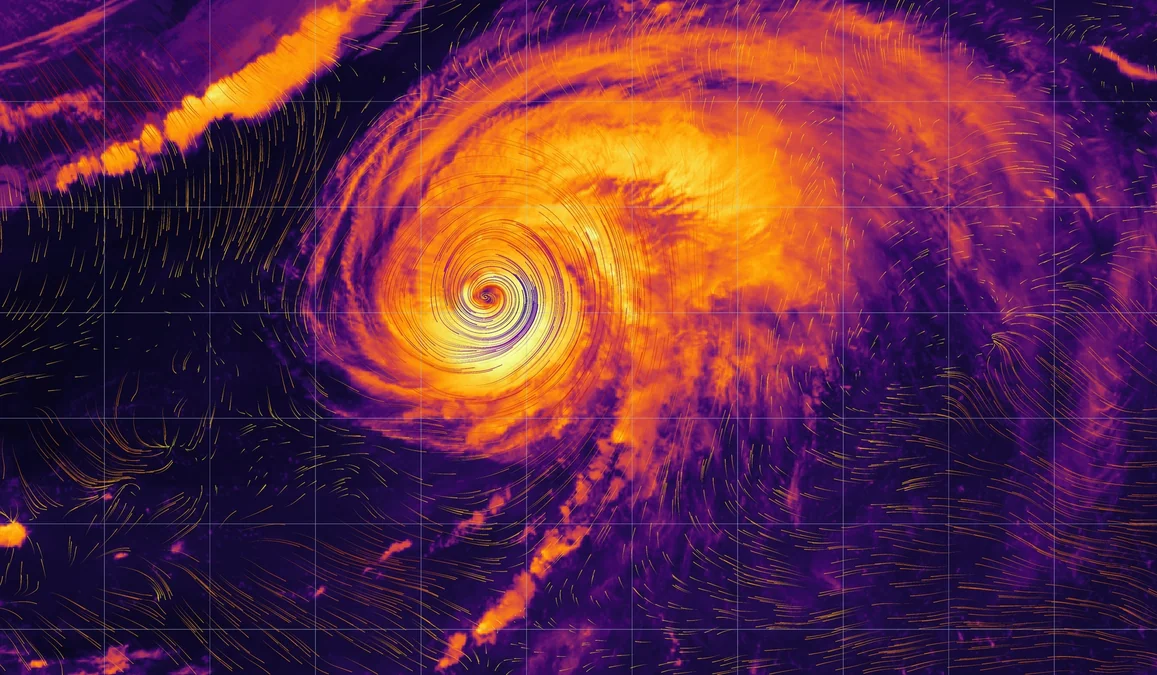

Diffusion-based Atmospheric Downscaling on AMD Instinct GPUs

Read this blog post to learn about and understand the theory of downscaling models. Also learn how to run a particular model, CorrDiff, on AMD GPUs.

QuickReduce FP4 Quantization and Benchmarking on MI355

Learn how QuickReduce uses FP4 quantization to accelerate all-reduce communication and evaluate its performance on AMD Instinct MI355 GPUs.

Semantic Fencing of Video Streams Using Embedding Splits from Vision Foundation Models

Learn how to semantically split vision datasets using foundation model embeddings on AMD GPUs to reduce leakage and improve evaluation.

Further Accelerating Kimi-K2.5 on AMD Instinct™ MI325X: W4A8 & W8A8 Quantization with AMD Quark

Quantize Kimi-K2.5 to W4A8 and W8A8 using AMD Quark and serve on MI325X with FlyDSL and AITER for further inference acceleration.

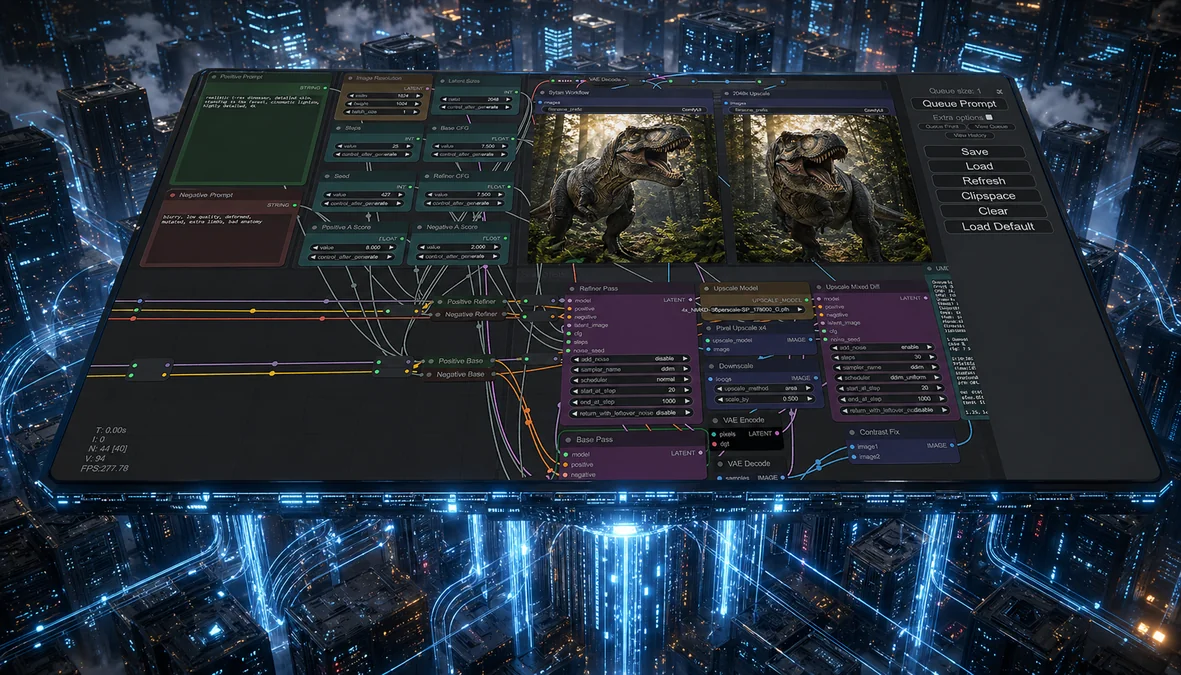

Accelerating ComfyUI Workflows on AMD Instinct™ MI355X GPUs with ROCm

We show that the MI355X delivers better performance than the B200 for ComfyUI after enabling PyTorch attention for gfx950.

vLLM-ATOM: Unlocking Native AMD Performance in the vLLM Ecosystem

Use ATOM as an out-of-tree vLLM plugin to keep vLLM compatibility while enabling AMD-optimized attention, model execution, and multi-model support including Kimi-K2.5.

AMD-Powered 3D Gaussian Splatting for Autonomous Driving Scenes

Run Street Gaussians on AMD Instinct MI300: migrate to latest gsplat, install on ROCm, and render dynamic street scenes.

Accelerating Mixture-of-Experts Execution with FarSkip-Collective Models

Explore a new MoE architecture designed for native computation-communication overlap, enabling efficient distributed execution.

TraceLens: Democratizing AI Performance Analysis

Explore how TraceLens automates profiler trace analysis to pinpoint bottlenecks and optimize AI workloads.