AI Blogs - Page 2

Primus Projection: Estimate Memory and Performance Before You Train

Learn how to use the Primus projection tool to estimate memory and performance for large-scale LLM training on AMD Instinct™ accelerator platforms.

Styled Text Image Generation with Eruku on AMD

A hands-on, reproducible guide to training and running Eruku on the LUMI supercomputer, powered by AMD Instinct MI250X GPUs.

Getting Started with FlyDSL Nightly Wheels on ROCm

A practical guide to installing and using FlyDSL nightly wheels on ROCm for fast, Python-native GPU kernel development.

FLy: A New Paradigm for Speculative Decoding — Accepting Semantically Correct Drafts Beyond Exact Match

This blog explores a new training-free, loosely speculative decoding method that accepts semantically valid mismatches and delivers a speedup over the original SPD method.

Serving CTR Recommendation Models with Triton Inference Server using the ONNX Runtime Backend

Learn how to deploy AI models on AMD GPUs with Triton Inference Server, now supporting ONNX Runtime and Python backends, and see performance benchmarks.

FlashInfer on ROCm: High‑Throughput Prefill Attention via AITER

FlashInfer is an open-source library for accelerating LLM serving that is now supported by ROCm.

Customizing Kernels with hipBLASLt TensileLite GEMM Tuning - Advanced User Guide

Master hipBLASLt TensileLite tuning. Learn to build custom kernels that deliver 150%-250% faster GEMM performance on AMD Instinct™ MI300X GPUs.

Deploy and Customize AMD Solution Blueprints

Learn how to deploy and customize AMD Solution Blueprints — from default deployment to swapping and reusing AMD Inference Microservices across multiple blueprints.

AMD Instinct™ GPUs MLPerf Inference v6.0 Submission

In this blog, we share the technical details of how we achieved the results in our MLPerf Inference v6.0 submission.

Reproducing the AMD MLPerf Inference v6.0 Submission Result

This blog provides instructions for potential customers and partners to verify our MLPerf Inference v6.0 submission results.

Leveraging AMD AI Workbench and Autoscaling to Scale LLM Inference for Optimal Resource Utilization

Learn how to use the AMD AI Workbench GUI and AIM Engine CLI capabilities to enable and configure autoscaling for your AI workloads.

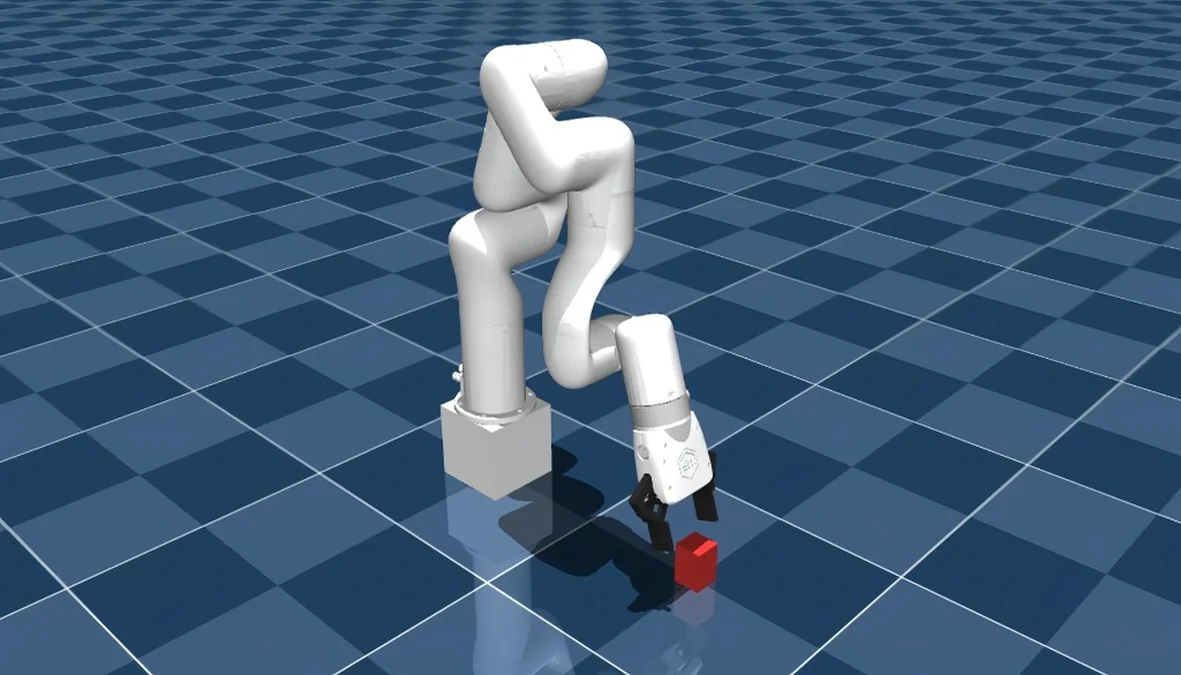

Training a Robotic Arm Using MuJoCo and JAX on AMD Hardware with ROCm™

A complete guide to training an RL-based pick-and-lift robotic arm in MuJoCo with JAX, running on AMD hardware via ROCm.