Developers Blogs

ROCm 7.13: Expanding Hardware, Tools, and Reach

Explore what's new in the ROCm 7.13 release, featuring expanded hardware support, GPU virtualization, enhanced developer tooling, and TheRock's modular packaging.

vLLM-ATOM: Unlocking Native AMD Performance in the vLLM Ecosystem

Use ATOM as an out-of-tree vLLM plugin to keep vLLM compatibility while enabling AMD-optimized attention, model execution, and multi-model support including Kimi-K2.5.

Primus Projection: Estimate Memory and Performance Before You Train

Learn how to use the Primus projection tool to estimate memory and performance for large-scale LLM training on AMD Instinct™ accelerator platforms.

Getting Started with FlyDSL Nightly Wheels on ROCm

A practical guide to installing and using FlyDSL nightly wheels on ROCm for fast, Python-native GPU kernel development.

Customizing Kernels with hipBLASLt TensileLite GEMM Tuning - Advanced User Guide

Master hipBLASLt TensileLite tuning. Learn to build custom kernels that deliver 150%-250% faster GEMM performance on AMD Instinct™ MI300X GPUs.

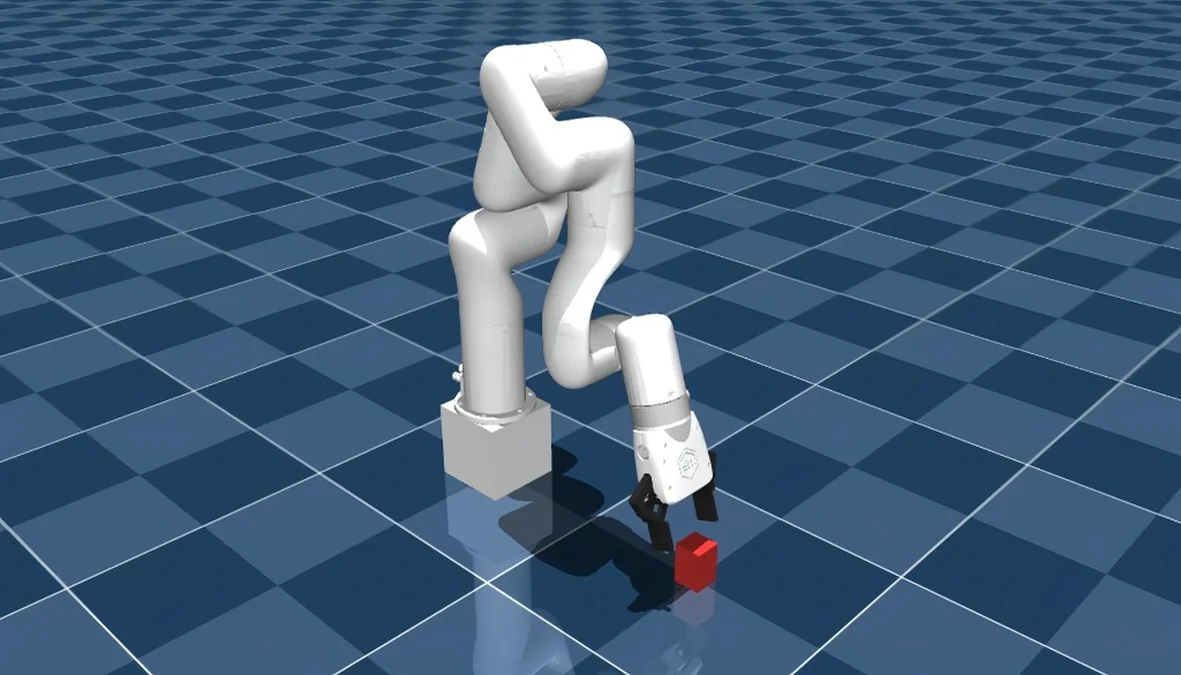

Training a Robotic Arm Using MuJoCo and JAX on AMD Hardware with ROCm™

A complete guide to training an RL-based pick-and-lift robotic arm in MuJoCo with JAX, running on AMD hardware via ROCm.

Edge-to-Cloud Robotics with AMD ROCm: From Data Collection to Real-Time Inference

This blog demonstrates a comprehensive Edge-to-Cloud robotics AI solution powered by the AMD ecosystem and the Hugging Face LeRobot framework.

hipBLASLt Online GEMM Tuning

Learn how to improve model performance with hipBLASLt online tuning merged into an LLM framework.

GROMACS Performance on AMD Instinct MI355X

Explore GROMACS molecular dynamics performance benchmarks on AMD Instinct MI355X GPUs with HIP acceleration.

Agentic Diagnosis for LLM Training at Scale

Explore how AI agents diagnose LLM training incidents — from RCCL hangs to throughput regressions — in one prompt with MaxText-Slurm.

Getting Started with ComfyUI on AMD Radeon™ RX 9000 Series GPUs

Learn how to set up and optimize ComfyUI on AMD Radeon RX 9000 GPUs with ROCm 7.1 — solve common issues and start generating.

MaxText-Slurm: Production-Grade LLM Training with Built-In Observability

MaxText-Slurm: A unified launch system for production-grade LLM training with observability on AMD GPU clusters.